Best Business Books 2012: Biography

Virtuosity Squared

(originally published by Booz & Company)

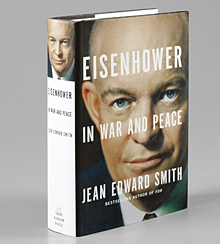

Jean Edward Smith

Eisenhower in War and Peace

(Random House, 2012)

Walter Isaacson

Steve Jobs

(Simon & Schuster, 2011)

Mark Kurlansky

Birdseye: The Adventures of a Curious Man

(Doubleday, 2012)

Great ideas often emerge from the collision of two disciplines. So, it seems, do great leaders. The subjects of this year’s best biographies each illustrate how often individual success is rooted in the merging of virtuosity in two disciplines: Dwight Eisenhower, who led two of the world’s largest organizations (the Allied forces in Europe during World War II and the U.S. government); Steve Jobs, who built the world’s most valuable company; and Clarence Birdseye, a self-taught biologist who pioneered a technology that revolutionized food production.

Eisenhower combined political genius with superb executive skills. Jobs wed the sensibility of an aesthete to an innovator’s appreciation of technology. As for Birdseye, he once said that he “was not cut out for a career in pure science and wanted to get into some field where [he] could apply scientific knowledge to an economic opportunity.”

Politics and Management

Eisenhower in War and Peace, Jean Edward Smith’s powerful story of the 34th U.S. president, is my choice for the best biography of the year. Dwight David Eisenhower was as close to a man for all seasons as we have had among presidents. He successfully led the military, the government, and a university.

Smith, a political scientist, historian, and political economist, is well prepared to tackle Eisenhower’s life, having previously written biographies of Franklin D. Roosevelt and Ulysses S. Grant, the latter another general who won a war by overwhelming force. Eisenhower can be read as a chronicle of World War II, a presidential coming-of-age story, or a portrait of the United States as an emerging global superpower. Business readers, though, should also regard it as an outstanding case study in leadership; in an alternative universe, one cannot imagine Eisenhower running General Motors Company into bankruptcy.

Smith portrays Eisenhower as a decisive yet thoughtful leader who had a genius for manipulating written and unwritten rules, bureaucracy, and social maps. He made the U.S. presidency look so easy that “Ike” himself has receded into a faint image of an avuncular leader who presided over a dull, prosperous era. Smith corrects numerous errors in accounts by others and gives us Eisenhower in full: not only the most popular president in modern U.S. history, but also one of the most effective.

Eisenhower ended the unwinnable war in Korea, Smith reminds us, “with honor and dignity,” and he sent the Seventh Fleet to protect Formosa from invasion by China. On the domestic front, he tamed inflation, balanced the federal budget, and quelled unemployment with the massive public works project of building the interstate highway system. Eisenhower unwound the excesses of McCarthyism, ended military foot-dragging over desegregation, and appointed the judge who gave Rosa Parks her seat in the front of the bus. In one of his most difficult decisions, he sent the 101st Airborne Division to Little Rock to put down defiance of a court order to desegregate the schools. Of course, he also made mistakes — we have Eisenhower to thank for the CIA coup that overturned a government in Iran, with repercussions still felt today.

Perhaps Eisenhower’s most significant legacy, though, was to show the value of cooperation and restraint. He thawed the Cold War, beat back attempts at gunboat diplomacy by U.S. allies, and worked easily across party lines. He also crushed two attempts by the National Security Council to use atomic weapons after World War II, insisting on a policy of deterrence instead.

Nothing in Eisenhower’s early life pointed to his brilliant future. He was born in 1890, the third of seven sons raised by a pair of religious eccentrics in Abilene, Kan. With no funds to attend college, Eisenhower made the most of a lucky break — the beginning of a lifetime pattern — when he won a competitive examination for an appointment to West Point, an opportunity usually given to those with political connections.

At West Point, where he was known mostly for his practical jokes and football skills, Eisenhower graduated 61st out of 164 cadets in his class. His early military career was undistinguished until he took a course in the Army’s first tank school and realized the new technology would revolutionize battle tactics. As a result, less than three years out of West Point, Eisenhower was charged with creating the first stateside tank training facility at Pennsylvania’s Camp Colt, where he commanded 10,000 men and 600 officers. There, Eisenhower’s logistical skills brought him to the attention of the War Department.

Smith is adept at painting the picture of this and other episodes that illustrate concretely how Eisenhower learned to be a leader through a combination of management skills and personal and political diplomacy. It was at Camp Colt that Eisenhower first displayed a talent for befriending powerful people, many of whom were his diametric opposites. One of the delights of Eisenhower lies in following the maneuvers of the man’s lifelong campaign to captivate everyone who crossed his path. The egotistical, flamboyant George Patton was Eisenhower’s first major conquest, and Patton promptly handed him another by introducing him to Brigadier General Fox Conner.

Conner wielded immense power as chief of staff to General John J. Pershing, who had led U.S. forces in World War I. Conner became entranced with Eisenhower, and trained him in military history, psychology, and “the art of persuasion.” In a dramatic example of the power of mentorship, he rescued Eisenhower from trouble, intervened on his behalf over and over, and arranged for him to work directly for Pershing.

Luck by all accounts featured prominently in Eisenhower’s career, so much so that Patton declared his initials D.D. stood for “Divine Destiny.” Yet Smith illustrates repeatedly that Eisenhower advanced because he never wasted his opportunities. Under Pershing, he exhaustively studied the battlefields of World War I. After winning admission to the Army’s selective Command and General Staff School at Fort Leavenworth, he graduated first in his class. His staff work sent him to key strategic posts in Paris, the Philippines, and the Panama Canal Zone. In another lucky stroke, Eisenhower was put in charge of creating a wartime mobilization plan that brought him into contact with financiers and businessmen.

By the start of World War II, Eisenhower had served in the military for 27 years. His career progress was glacial by the standards of today’s wireless world. Yet one of Smith’s insights is that the military’s then-rigid promotion system gave its officers experience and authority, which encouraged independence of thought. When George Marshall chose Eisenhower as chief of the Army’s War Plans Division in 1942, Eisenhower was a protégé of nearly every important Army general officer, and understood mobilization in a European theater better than anyone.

Smith details Eisenhower’s ascent from staff officer to wartime leader of the Allied forces as a triumph of executive ability, political acumen, and judgment honed through harsh experience of battles barely won. Eisenhower was a weak strategist. His skill at building consensus served him poorly as a field commander, but helped him become a military statesman who held together a fractious alliance that included FDR, Winston Churchill, Joseph Stalin, Charles de Gaulle, and a handful of strong-willed generals. The toughest, loneliest decision Eisenhower faced was whether Allied forces should cross the English Channel on June 6, 1944. Smith’s account of Operation Overlord is absorbing both as a military story and as a personal drama. Eisenhower staked his career on the decision to launch D-Day, and it led to the victory that catapulted him into the White House.

Eisenhower is an evenhanded account that reveals the sources and reasoning behind its conclusions, a signature of a great historian and confident researcher. Smith does not shy from showing Eisenhower’s flaws, including a fundamental impenetrability that occasionally turned to coldness. Smith lays out Eisenhower’s passionate wartime affair with his bright, attractive British driver, Kay Summersby. While Eisenhower contemplates marrying Summersby, he pours out a flood of insincere letters to his wife, Mamie: “I desperately miss you.…” Then he drops Summersby with an impersonal note when he returns home after the war to re-embrace Mamie (and his ambitions). “George Patton would have said a warmer goodbye to his horse,” notes Smith.

After the war, Eisenhower, who claimed he wanted to semi-retire and live on a farm, took on high-profile work while Harry S. Truman finished out his second term as president. He set Columbia University’s fiscal house in order as its president and served as supreme commander of NATO. When calls for him to run for president reached a crescendo, Eisenhower set what must be a record for coyness by lingering in Europe with NATO rather than filing in the early primaries. His sponsors — determined as always — won him the nomination through a brokered convention that resembled a coup. Once more, Eisenhower made the most of his opportunity; during the eight years he spent in the White House, his legacies multiplied as fast as his popularity ratings rose.

Smith’s magisterial book sparkles throughout with lessons from Eisenhower’s life and career. In his 1961 farewell address, Eisenhower warned that “this world of ours” must “avoid becoming a community of dreadful fear and hate, and be, instead, a proud confederation of mutual trust and respect.” Forging these confederations was how Eisenhower changed the world. It’s a worthwhile lesson to consider as we face the challenges of our own age.

Aesthetics and Technology

Walter Isaacson opens Steve Jobs with the tale of a wavering courtship in which Jobs first seeks him out to write his biography, then becomes skittish, and finally recommits when his pancreatic cancer advances and it is clear Jobs’s story will soon end. Jobs spoke openly to Isaacson of his enemies, friends, erstwhile friends, and, to a lesser degree, himself. Others filled in the rest of the portrait of one of technology’s most charismatic titans — along with providing a much-needed check on Jobs’s tendency to create his own reality.

Jobs saw his importance in his ability to stand at the intersection of the humanities and the sciences, a theme that he suggests and Isaacson adopts for the biography. By this, Jobs did not mean he simply stood there; he plainly saw himself as transmuting these disciplines through the alchemy of his genius into a perfected whole. (Jobs never claimed to be modest.) He cared about the tiniest details and relied on a powerful intuition to bring emotional resonance to designs that he insisted be executed flawlessly. Trying to copy Jobs, one source observes, would be like trying to copy Picasso by using red paint. In fact, the lessons in his story are most powerful when considered as a cautionary tale.

Steven Paul Jobs was born in 1955, the son of an unmarried Wisconsin university student and a Syrian Muslim teaching assistant; his birth parents put him up for adoption. His adoptive father, Paul Jobs, a high school dropout with a passion for mechanics and woodworking, instilled in his son an appreciation for the sanctity of craftsmanship. Growing up in what would become Silicon Valley, the boy was surrounded by friends whose parents were engineers.

To his credit, Isaacson makes clear that Jobs was by no means destined for greatness. Had he been raised as a Syrian-American by his “dreamy, peripatetic” birth mother in Wisconsin, there is no telling how his life would have turned out. As it was, Jobs often was his own worst enemy. This point is made unmistakably, and entertainingly, through accounts of his bohemian, bizarre, selfish, willful, and cruel behavior.

Early on, Jobs forces his much-loved adoptive parents to cripple themselves financially by sending him to expensive Reed College — then bristles at the concept of a curriculum, drops out after six months, hangs around auditing classes that suit his aesthetic tastes (such as modern dance), and lives on money scrounged by cashing in soda bottles for deposits. (Eventually, he gave his parents Apple stock.) The younger Jobs trips on LSD, pirates Bob Dylan tapes, flirts with the Hare Krishna movement, and refuses to bathe. At one point, a Hindu holy man in the Himalayas spots, or perhaps smells, Jobs, and grabs him in order to lather and shave him. Unfortunately, the lesson did not stick.

Jobs would later attribute much of his success to this period, even making the preposterous claim that had he not audited a calligraphy class, “it’s likely that no personal computer” would have had multiple typefaces or proportionally spaced fonts. Fortunately, he soon began working with Steve Wozniak, an older alumnus of his high school who had a sizable tolerance for grandiosity. The two had bonded as teenagers over an Esquire article, spotted by Wozniak’s mother, that described how hackers pirated free phone calls. Wozniak built a circuit that could control AT&T’s long-distance switching network, and Jobs figured out how to package and market it at a 78 percent profit margin. This experience taught the two teenagers they could “control billions of dollars’ worth of infrastructure,” writes Isaacson. It also, says Wozniak, “gave us a taste of what we could do with my engineering skills and his vision.” It was the first of many visions based on breaking rules.

Reunited in 1976, the pair founded Apple Computer in the proverbial garage with US$1,300, with Wozniak as engineer and Jobs in the role he would eventually settle into permanently: visionary and promoter. Jobs’s journey as a manager and business partner covered much rougher ground. Among the many unfortunate episodes are his ungracious treatment of Wozniak and his love-hate (mostly hate) relationship with Bill Gates, who was generous in his comments about Jobs for the book, only to be repaid with insults. Jobs’s greatest business error took place when Apple went through a Silicon Valley rite of passage, the transition from founder-led to professional management. Jobs lost the confidence of CEO John Sculley through his insistence on (mis)managing the Macintosh division, which led to his ouster from Apple. But being rejected by his own brainchild at age 30 focused Jobs on what mattered. The ensuing years brought the NeXT computer; animation by Pixar; and eventually, a second chance at Apple that yielded iTunes, iPhoto, the iPod, the iPhone, and the iPad.

Isaacson details these stories as evolutionary rungs on a ladder of creativity. One innovation follows another, as Jobs and his company ascend to iconic heights. Each of these products also came to market accompanied by lots of collateral damage. Jobs’s relationships in business were complicated by the fact that he was, as a contemporary describes, “full of broken glass” as a result of his early abandonment to adoption. He was “the opposite of loyal…anti-loyal,” according to a colleague: a tyrant at work and a “frighteningly cold,” rejecting narcissist in his personal life, who attracted people through brief displays of interest, only to mistreat and abandon them.

Implicitly, Steve Jobs raises the question of whether indifference to social norms and a degree of madness are requirements of creative genius. As he rises, Jobs lives on fruit to ward off mucus, soaks his feet in the toilet to relieve stress, offends his colleagues with his filthy body, falls into fits of tears during business meetings, and turns orange from eating only carrots.

In a sense, Jobs is the un-Eisenhower. He is indifferent to working through procedures and following rules, including the most basic rule of acknowledging reality, which Isaacson describes in multiple breathtaking scenes of lying and self-deception. Seductive as a Svengali-like, “mesmerizing but corrupt” preacher, Jobs enchants business partners into making deals that he revokes on a whim. Outraged at the thought of anyone stealing his ideas, he takes pride in pilfering intellectual property from Xerox. Stopped for speeding, he honks at the policeman for not writing the ticket fast enough. Because Jobs’s rule-breaking attitude is part of his success, these stories are amusing, up to a point. But when he horrifies his friends and family by refusing conventional medical treatment for a curable form of cancer, his willfulness becomes a tragic flaw.

Jobs’s lack of introspection complicated Isaacson’s task. (At one point, he simply ignores a question about why he felt a kinship with two belligerent, driven, and ultimately doomed fictional characters, King Lear and Captain Ahab.) Steve Jobs was also completed while its subject was dying and published a few weeks after his death. One can’t help but wonder how the timing affected the interviews, as well as what fruit might remain on the tree in the form of sources who did not cooperate.

The biographer’s portrait, despite stories and commentaries that soften the edges, is of Jobs as a detestable genius. His charisma apparently made some people loyal to him. But Jobs, in his own words as quoted in the book, is anything but charismatic, which means readers who encounter him on paper are unlikely to feel it.

Fifty years from now, when the iPad and the iPhone are superseded, what will people remember about Steve Jobs? His crystalline focus. His defining taste. His perfectionism. His intuitive salesmanship. And a pragmatic streak that expressed itself in understanding design from the user’s point of view. Jobs admired the titans of industrial design, people like Raymond Loewy. In the end, he became one of them, and more, because he also had the will and the wiles to forge his creative genius into the world’s most valuable company.

Science and Business

Mark Kurlansky, author of Cod: A Biography of the Fish That Changed the World (Walker & Co., 1997) and Salt: A World History (Walker & Co., 2002), likes to take his readers down the mineshaft into narrow subjects — in this case, the life of an unusual man — and use those subjects to unearth hidden realms in the commercial universe. Here, he also fills an important niche by documenting the life of an entrepreneur who changed the world.

Most people think the Birds Eye brand emblazoned on packages of frozen vegetables has something to do with an actual bird. But Kurlansky tells us otherwise. In Birdseye: The Adventures of a Curious Man, he relates the story of Clarence Birdseye, the man who changed the way the world eats by figuring out how to flash-freeze food on an industrial level. Thanks to Birdseye, by the 1930s, people who previously had lived on mushy canned goods in the winter were enjoying fresh-tasting food year-round.

From childhood, Birdseye was an amateur naturalist who kept his eye on turning a profit from his hobby. Born in Brooklyn in 1886 to a prominent and wealthy family, he encountered the wild as an 8-year-old when his family bought a farm on Long Island. Two years later, he trapped a dozen live muskrats and shipped them to a customer he found in England. While attending college at Amherst, he sold frogs to the Bronx Zoo for reptile chow, and collected rats of a nearly extinct species from behind a butcher shop to sell to a geneticist.

Birdseye was forced to drop out of Amherst when his parents fell into financial distress around 1908, during one of the worst banking and economic crises in U.S. history. He took a job with the U.S. Biological Survey, counting coyotes in New Mexico and Arizona and hunting the ticks that transmit Rocky Mountain spotted fever, but eventually his commercial instincts led him into fur trading. After collecting bobcat and coyote skins in the western U.S., he moved north to search for fox and ermine pelts by dogsled in Labrador.

Kurlansky entertains readers with stories of his subject’s consumption of delicacies as varied as skunks and horned owls, a particular favorite being fried rabbit livers. Birdseye was omnivorous and obsessed with food, and in the frozen North, a “hunger for the taste of freshness had a lasting effect,” Kurlansky writes.

It was in Labrador that Birdseye discovered the flash-freezing process that would transform food production. Kurlansky keeps the story moving, although the second half of the book, which details how Birdseye invented the freezing equipment and brought his product to market, is necessarily dry compared to the account of the early years in Labrador. A highlight is Birdseye’s aptitude as a promoter, which was essential to winning over resisters, whether culinary conservatives or people suspicious that the new technology was tampering with God’s intentions. By the mid-1940s, U.S. households were convinced: In 1945 and 1946, they bought 800 million pounds of frozen food.

Birdseye’s success came from a marriage of two qualities. He needed his business skills to make his scientific ambitions a reality, just as Jobs needed his aesthetic sense to ignite his technological visions and Eisenhower needed his political genius to lever his executive ability. Birdseye died at age 69 in 1956, a year after Jobs was born. The business he built had become so ubiquitous that the man himself was forgotten. It took more than half a century for Kurlansky to arrive and recognize the need for a biography.

To be great, a biography must do more than tell us an interesting life story; it must teach us something new and worthwhile about ourselves or the world. This year’s best biographies do just that: They illuminate realms forgotten and unknown, and contain lessons that range from inspirational to cautionary. Above all, the lives of Eisenhower, Jobs, and Birdseye give us a collective portrait of the enormous potential that can be unleashed when two fundamental, and even opposing, skills are combined in a single human being.

Author profile:

- Alice Schroeder is an investor, journalist, and best-selling author of The Snowball: Warren Buffett and the Business of Life (Bantam Books, 2008), selected as a 2009 s+b best business book.